Large technical programs succeed when the work across teams is intentionally connected. That connection shows up as dependencies – one team’s output becomes another team’s input – and managing those dependencies deliberately is what separates programs that deliver predictably from ones that constantly react.

In distributed environments, with multiple teams, vendors, and time zones in play, that clarity matters even more.

Why Dependencies Go Invisible

Teams plan locally. A product team works its backlog; infrastructure manages its queue; and a vendor delivers against a contract. Each team has a coherent view of its own work, but limited visibility into how that work connects to the program around it.

This isn’t a dysfunction – it’s just how team-level planning works. The problem is that program-level dependencies don’t belong to anyone’s backlog. Shared data models, API contracts, security reviews, vendor integrations – these live between teams, and they tend to go unmanaged until something breaks.

Distributed environments amplify this further. Slower communication and fewer informal touchpoints remove the background coordination that sometimes compensates for missing structure in co-located programs. I’ve seen teams operate for weeks on assumptions about what a partner team would deliver, only to discover the misalignment well into execution when the cost of adjustment was already high.

Treat Dependency Modeling as Design Work

Most organizations discover dependencies during execution – in planning meetings, on status calls, or in urgent messages – which creates a reactive posture that’s hard to recover from. The alternative is to model dependencies as part of program architecture, before execution begins.

At that stage, the model doesn’t need to capture every task. It needs to answer three questions:

- Which workstreams produce capabilities others depend on?

- In what sequence must those capabilities be delivered?

- Where are the highest coordination risks?

That’s enough to establish a shared structural understanding of the program – not a project schedule, but a map of how the work fits together. In practice, when I’ve joined programs mid-execution, reconstructing this map has been one of the first things I focus on. You can’t manage what you don’t structurally understand, and dependencies are the skeleton of that structure.

Model Capabilities, Not Tasks

Trying to map dependencies at the task level is a common trap, and large programs make it unworkable almost immediately. With thousands of tasks across multiple workstreams, the map becomes too dense to navigate, let alone communicate to the teams who need to use it.

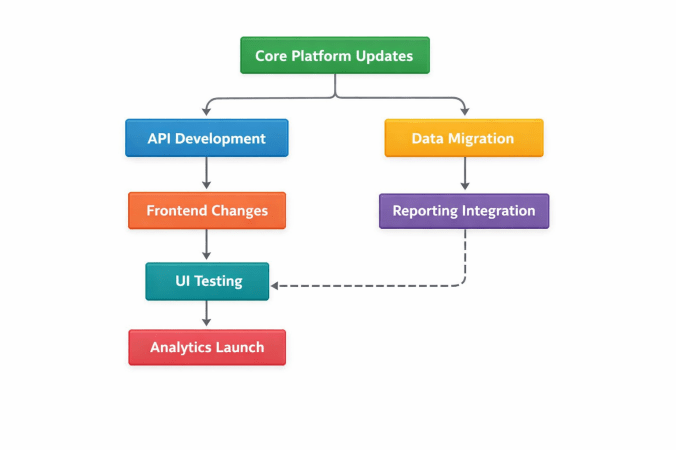

Modeling at the capability or deliverable level works better. Think about: identity and authentication services, data ingestion pipeline, customer-facing APIs, reporting infrastructure, compliance workflows. These are the outputs one team produces that other teams actually depend on, and keeping the model at this level maintains readability while staying focused on what drives program risk.

Teams still plan their own tasks within their workstreams. The capability model simply provides the structural layer that sits above individual backlogs.

Identify the Dependency Spine

Once capability dependencies are visible, a pattern usually emerges: a small subset carries most of the delivery risk. Platform infrastructure, shared data models, long-lead vendor integrations, compliance approvals – these influence multiple workstreams at once and tend to set the pace for everything downstream.

This is the dependency spine: the set of dependencies that largely determines whether the program timeline holds, and the program-level equivalent of a critical path. In most programs I’ve worked on, the spine turns out to be surprisingly small – often five to eight dependencies that, if delayed, would affect nearly everything else. Identifying them early changes how the program is managed.

Making the spine visible to all teams is where the real coordination value comes from. A simple, dedicated diagram that highlights these dependencies – separate from the full capability map – gives teams a shared reference point that’s easy to discuss in planning conversations and reviews. It doesn’t need to be elaborate; a clear visual showing which capabilities are on the spine, which teams own them, and what depends on them downstream is usually enough. The goal is that any team lead, at any point in the program, can look at it and immediately understand where the critical sequencing pressure lives.

That visibility serves different audiences differently. Leadership uses it to allocate resources and remove blockers before delays cascade. Delivery teams use it to understand why certain handoffs are being treated with urgency. And for teams whose work sits on the spine, it makes explicit that their delivery timing has program-wide consequences – a kind of accountability that’s harder to establish through status reporting alone.

Make It Readable

A dependency model that only the program manager can interpret isn’t doing much. Shared visibility requires something teams can actually read without a lengthy walkthrough, and a useful test is simple: can someone understand the key relationships during a meeting, on the first look? If not, it’s too complex.

Capability-level modeling helps here, as does restraint. The instinct to show everything is understandable, but a map that captures every nuance quickly stops functioning as a communication tool. Directional relationships and major handoffs belong in the model; precise timelines belong in other artifacts.

When teams can see the flow clearly – platform infrastructure enables the data pipeline, which enables analytics; API contracts stabilize before client integration begins – the map starts generating better questions on its own. When will the APIs be stable enough to build against? Is an initial schema sufficient to start, or do we need the full data model first? Can these two workstreams run in parallel? That kind of conversation is exactly what the model should produce.

Connect It to How Teams Actually Plan

A dependency model that sits apart from team planning processes has limited effect. Different teams plan on different cadences – sprints, infrastructure cycles, vendor milestones – and the dependency model needs to act as a translation layer between these, surfacing the coordination points that matter. In doing so, those handoffs become explicit commitments rather than assumptions that may or may not hold.

Distributed Teams Need More Structure, Not Less

Co-located teams benefit from ambient coordination – hallway conversations, informal check-ins, shared physical context. In distributed programs, that’s mostly absent, and the gap is easy to underestimate. Teams working across time zones and organizational boundaries can operate for weeks in parallel without realizing their assumptions about each other’s timelines don’t align.

A well-maintained dependency model creates structural clarity that doesn’t depend on those informal channels. Teams who can see how their work connects to other workstreams are better positioned to catch upstream issues early and resolve them directly, without everything needing to escalate to the program level.

Keep It Current

One thing I’ve learned is that a dependency model reflects the program as it was understood at a point in time, but programs change. Architectures shift, new teams come in, priorities move (and move again). A model that no longer reflects how the work is actually structured creates a quiet kind of risk: people reference it with confidence while the underlying assumptions have drifted.

The fix is regular, deliberate review rather than periodic scrambles. After major architectural decisions, when new teams or vendors join, when risk increases, and before significant milestones are natural checkpoints. The question is always the same: do the sequencing assumptions still hold? That discipline is considerably less work than rebuilding coordination after a misalignment surfaces mid-execution.

The Real Value

The direct benefit of dependency modeling is coordination – teams know what they need, when, and from whom. But there’s a broader effect that’s harder to quantify and worth naming.

A good dependency model gives everyone a working understanding of how the program fits together: how their contribution connects to the whole, why sequencing matters, where collaboration is actually required. Planning gets more realistic, risks surface earlier, and program-level decisions are better grounded as a result. In my experience, teams with this shared picture tend to coordinate more proactively and escalate less, not because problems disappear, but because people can see them coming.

AI tools are starting to help with parts of this – analyzing documentation and backlogs to surface potential dependencies or visualize relationships – and that’s genuinely useful. Even so, deciding which dependencies constitute the spine, how to frame the model for a specific group of teams, and when to revise it as conditions shift are judgment calls that require context no tool currently has. The tool can assist the mapping; the thinking still has to come from somewhere.

Programs succeed when the structure of the work is understood across all the teams doing it. Dependency models make that structure visible, and in distributed environments, that clarity often determines whether a program delivers predictably or spends its time managing problems that the map would have shown in advance.

Where have dependencies caused the most friction in programs you’ve worked on – within engineering teams, across departments, or in vendor integrations? Curious what patterns others have run into.